Calculating Events-In-Progress is a very common requirement, and many of my fellow bloggers, like Chris Webb, Alberto Ferrari and Jason Thomas, have already blogged about it and come up with some really nice solutions. Alberto also wrote a white-paper summing up all their findings, which is a must-read for every DAX and Tabular/PowerPivot developer.

However, I recently had a slightly different requirement where I needed to calculate the Events-In-Progress for Time Periods – e.g. the Open Orders in a given month – and not only for a single day. The calculations shown in the white-paper only work for a single day so I had to come up with my own calculation to deal with this particular problem.

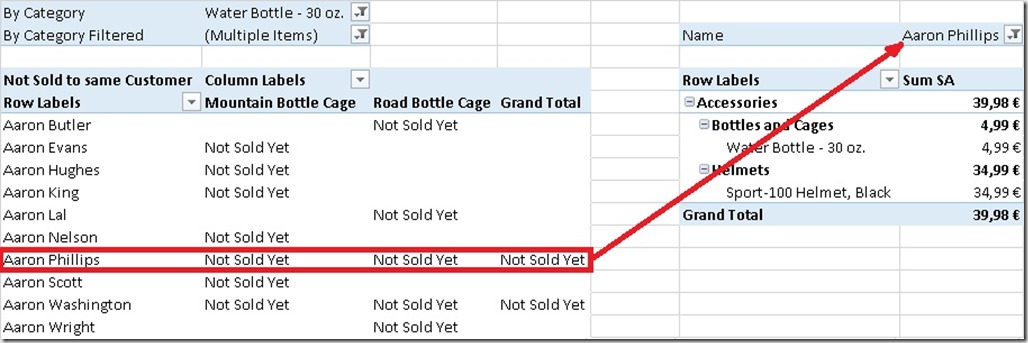

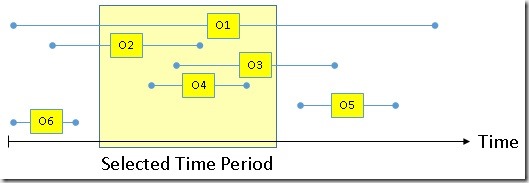

Before we can start, we need to identify which orders we actually want to count when a Time Period is selected. Basically, we have to differentiate between six types of orders and decide which of them the calculation should count:

| Order | Definition |
| --- | --- |
| Order1 (O1) | Starts before the Time Period and ends after it |
| Order2 (O2) | Starts before the Time Period and ends in it |
| Order3 (O3) | Starts in the Time Period and ends after it |
| Order4 (O4) | Starts and ends in the Time Period |
| Order5 (O5) | Starts and ends after the Time Period |
| Order6 (O6) | Starts and ends before the Time Period |

For my customer, an order was considered “open” if it was open at any point within the selected Time Period, so in our case we need to count only Orders O1, O2, O3 and O4. The first calculation you would usually come up with may look like this:

```dax
[MyOpenOrders_FILTER] :=
CALCULATE (
    DISTINCTCOUNT ( 'Internet Sales'[Sales Order Number] ), -- our calculation, could also be a reference to a measure
    FILTER (
        'Internet Sales',
        'Internet Sales'[Order Date]
            <= CALCULATE ( MAX ( 'Date'[Date] ) )
    ),
    FILTER (
        'Internet Sales',
        'Internet Sales'[Ship Date]
            >= CALCULATE ( MIN ( 'Date'[Date] ) )
    )
)
```

We apply custom filters here to get all orders that were ordered on or before the last day and were also shipped on or after the first day of the selected Time Period. This is pretty straightforward and works just fine from a business point of view. However, performance could be much better, as you probably already guessed if you read Alberto’s white-paper.
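The two filter conditions boil down to a simple interval-overlap test. As a minimal sketch, the following DAX query checks the six order types against a made-up period (March 2024). The dates, the `Orders` variable and its column names are illustrative assumptions and not part of the actual model, and the query assumes an engine that supports variables and table constructors:

```dax
EVALUATE
VAR PeriodStart = DATE ( 2024, 3, 1 )   -- hypothetical first day of the selected period
VAR PeriodEnd = DATE ( 2024, 3, 31 )    -- hypothetical last day of the selected period
VAR Orders =
    SELECTCOLUMNS (
        {
            ( "O1", DATE ( 2024, 2, 10 ), DATE ( 2024, 4, 5 ) ),   -- starts before, ends after
            ( "O2", DATE ( 2024, 2, 20 ), DATE ( 2024, 3, 10 ) ),  -- starts before, ends in
            ( "O3", DATE ( 2024, 3, 15 ), DATE ( 2024, 4, 10 ) ),  -- starts in, ends after
            ( "O4", DATE ( 2024, 3, 5 ), DATE ( 2024, 3, 20 ) ),   -- starts and ends in
            ( "O5", DATE ( 2024, 4, 2 ), DATE ( 2024, 4, 15 ) ),   -- starts and ends after
            ( "O6", DATE ( 2024, 2, 1 ), DATE ( 2024, 2, 15 ) )    -- starts and ends before
        },
        "Order", [Value1],
        "Order Date", [Value2],
        "Ship Date", [Value3]
    )
RETURN
    ADDCOLUMNS (
        Orders,
        -- same two conditions as in [MyOpenOrders_FILTER]
        "Open In Period", [Order Date] <= PeriodEnd && [Ship Date] >= PeriodStart
    )
```

With these sample dates, only O1 to O4 satisfy both conditions: O5 fails the Order Date test and O6 fails the Ship Date test.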

So I integrated his logic into my calculation and came up with this formula (note that I could not use the final Yoda-Solution as I am using a DISTINCTCOUNT here):

```dax
[MyOpenOrders_TimePeriod] :=
CALCULATE (
    DISTINCTCOUNT ( 'Internet Sales'[Sales Order Number] ),
    GENERATE (
        VALUES ( 'Date'[Date] ),
        FILTER (
            'Internet Sales',
            CONTAINS (
                DATESBETWEEN (
                    'Date'[Date],
                    'Internet Sales'[Order Date],
                    'Internet Sales'[Ship Date]
                ),
                [Date], 'Date'[Date]
            )
        )
    )
)
```

To better understand the calculation you may want to rephrase the original requirement to this: “An open order is an order that was open on at least one day in the selected Time Period”.

I am not going to explain the calculations in detail again as the approach was already very well explained by Alberto and the concepts are the very same.

An alternative calculation is this one, which of course produces the same results but performs “differently”:

```dax
[MyOpenOrders_TimePeriod2] :=
CALCULATE (
    DISTINCTCOUNT ( 'Internet Sales'[Sales Order Number] ),
    FILTER (
        GENERATE (
            SUMMARIZE (
                'Internet Sales',
                'Internet Sales'[Order Date],
                'Internet Sales'[Ship Date]
            ),
            DATESBETWEEN (
                'Date'[Date],
                'Internet Sales'[Order Date],
                'Internet Sales'[Ship Date]
            )
        ),
        CONTAINS ( VALUES ( 'Date'[Date] ), [Date], 'Date'[Date] )
    )
)
```

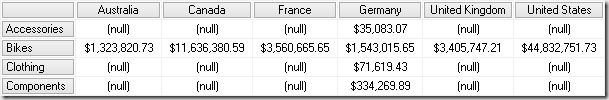
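To see what the outer FILTER in [MyOpenOrders_TimePeriod2] iterates over, it can help to look at the expanded table on its own. The following is a minimal sketch that reuses only the table and column names from the measure above; the limit of 20 rows is an arbitrary choice for illustration, and TOPN may return more rows if there are ties:

```dax
EVALUATE
TOPN (
    20,  -- arbitrary sample size, just to keep the result readable
    GENERATE (
        -- one row per distinct Order Date / Ship Date combination
        SUMMARIZE (
            'Internet Sales',
            'Internet Sales'[Order Date],
            'Internet Sales'[Ship Date]
        ),
        -- expanded to one row per day between Order Date and Ship Date
        DATESBETWEEN (
            'Date'[Date],
            'Internet Sales'[Order Date],
            'Internet Sales'[Ship Date]
        )
    ),
    'Internet Sales'[Order Date], ASC
)
```

Each result row pairs an Order Date / Ship Date combination with one day on which orders with that combination were open. The measure then keeps only those rows whose day is part of the selected Time Period.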

I said it performs “differently” because, as with all DAX calculations, performance also depends on your model, the data, and the distribution and granularity of the data. So you should test which calculation performs best in your scenario. I did a simple comparison in terms of query performance for AdventureWorks and also my customer’s model, and the results are slightly different:

| Calculation (results in ms) | AdventureWorks | Customer’s Model |
| --- | ---: | ---: |
| [MyOpenOrders_FILTER] | 58.0 | 1,094.0 |
| [MyOpenOrders_TimePeriod] | 40.0 | 390.8 |
| [MyOpenOrders_TimePeriod2] | 35.5 | 448.3 |

As you can see, the original FILTER-calculation performs worst on both models. The last calculation performs better on the small AdventureWorks-Model, whereas on my customer’s model (16 million rows) the calculation in the middle performs best. So it’s up to you (and your model) which calculation you should prefer.

The neat thing is that all three calculations can be used with any existing hierarchy or column in your Date-table and, of course, also on the Date-Level, just like the original calculation.
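A quick way to check this, and to compare the three measures side by side, is a query that groups by an arbitrary column of the Date-table. This is a sketch only: `'Date'[Calendar Year]` is an assumed column name that needs to be replaced with a column from your own Date-table, and the three measures are assumed to exist in the model under the names used above.

```dax
EVALUATE
SUMMARIZECOLUMNS (
    'Date'[Calendar Year],  -- assumed column name; any Date-table column should work
    "Open Orders (FILTER)", [MyOpenOrders_FILTER],
    "Open Orders (TimePeriod)", [MyOpenOrders_TimePeriod],
    "Open Orders (TimePeriod2)", [MyOpenOrders_TimePeriod2]
)
ORDER BY 'Date'[Calendar Year]
```

All three result columns should show identical numbers for every row. Evaluating one measure at a time with a query tracing tool, such as the Server Timings feature in DAX Studio, is one way to compare their performance on your own model.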

Download: Events-in-Progress.pbix