As you probably know from my last blog post, I am currently upgrading the PowerBI reporting platform of one of my customers from a PowerBI backend (dataset hosted in the PowerBI service) to an Azure Analysis Services backend. The upgrade/import of the dataset into Azure Analysis Services itself worked pretty flawlessly, and after switching the connection of the reports everything worked as expected and everyone was happy. However, things got a bit tricky when it came to automatically refreshing the Azure Analysis Services database, which was based on an Azure Data Lake Store. For the original PowerBI dataset this was pretty straightforward, as a scheduled refresh from an Azure Data Lake Store data source works out of the box. For Azure Analysis Services this is a bit different.

When you build and deploy your data model from Visual Studio, you are prompted for the credentials to access ADLS, which are then stored in the data source object of AAS. As you probably know, AAS uses OAuth authentication to access data from ADLS, and this causes a lot of problems. OAuth is based on tokens, and those tokens are only valid for a limited time; by default this is 2 hours. This basically means that you can process your database for the next 2 hours, after which processing fails with an error message saying that the token expired. (The above applies to all OAuth sources!)

This problem is usually solved by using an Azure Service Principal, whose token does not expire, instead of a regular user account. Unfortunately, this is not supported at the moment for ADLS data sources, so you have to work around this issue.

IMPORTANT NOTE: NONE OF THE FOLLOWING IS OFFICIALLY SUPPORTED BY MICROSOFT !!!

So the current situation that we need to solve is as follows:

- we can only use regular user accounts to connect AAS to ADLS as service principals are not supported yet

- the token expires after 2 hours

- the database has to be processed on a regular basis (daily, hourly, …) without any manual interaction

- manually updating the token is (of course) not an option

Before you continue here, make sure that you read this blog post first: https://blogs.msdn.microsoft.com/dataaccesstechnologies/2017/09/01/automating-azure-analysis-service-processing-using-azure-automation-account/

It describes the general approach of using Azure Automation to process an Azure Analysis Services model, and most of the code in this blog post is based on it!

Also this older blog post will be a good read as some concepts and code snippets are reused here.

Back to our example – as we were already using Azure Automation for some other tasks, we decided to also use it here. Also, PowerShell integrates very well with other Azure components and was the language of choice for us. To accomplish our goal we had to implement 3 steps:

- acquire a new OAuth token

- update the ADLS data source with the new token

- run our processing script

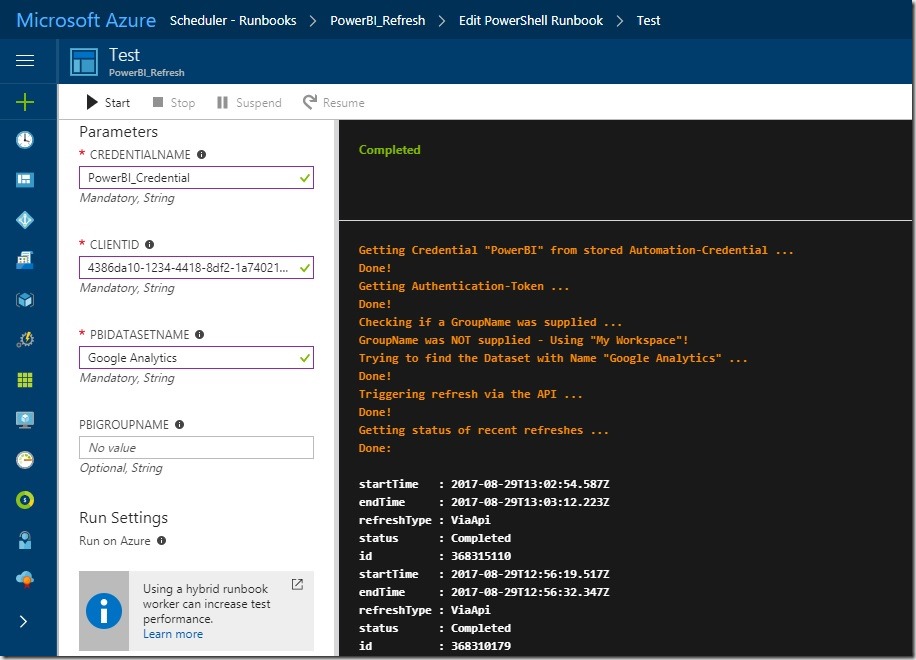

I could copy the code for the first step more or less from one of my older blog posts (here) where I used PowerShell to acquire an OAuth token to trigger a refresh in PowerBI.

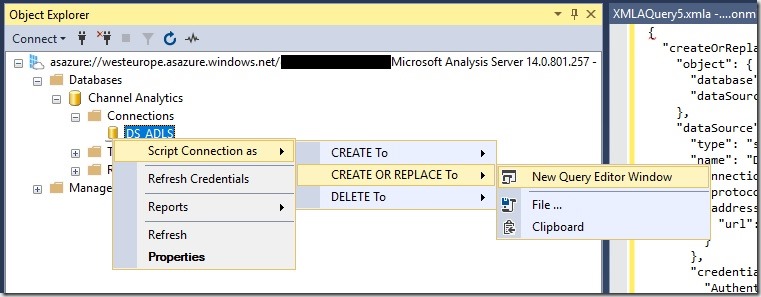
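As a rough illustration of the first step, the token request could look something like the sketch below. This is not the code from the download: the function names are made up for this example, and the token endpoint, the `password` grant type and the resource URI `https://datalake.azure.net/` are my assumptions about what such a request would need.

```powershell
# Sketch only: function names are hypothetical; endpoint, grant type and
# resource URI are assumptions, not taken from the actual runbook.
function New-AdlsTokenRequestBody {
    param(
        [Parameter(Mandatory)] [string] $ClientId,   # ApplicationID of the native AAD application
        [Parameter(Mandatory)] [string] $UserName,
        [Parameter(Mandatory)] [string] $Password
    )
    # Form fields for an OAuth2 "resource owner password" token request
    return @{
        grant_type = 'password'
        resource   = 'https://datalake.azure.net/'   # assumed resource URI for ADLS
        client_id  = $ClientId
        username   = $UserName
        password   = $Password
    }
}

function Get-AdlsOAuthToken {
    param(
        [Parameter(Mandatory)] [string] $TenantId,
        [Parameter(Mandatory)] [hashtable] $Body
    )
    $uri = "https://login.microsoftonline.com/$TenantId/oauth2/token"
    # For POST requests, Invoke-RestMethod sends a hashtable body as form fields
    return Invoke-RestMethod -Method Post -Uri $uri -Body $Body
}
```

In a Runbook, the user name and password would typically come from an Azure Automation credential asset (for example via `Get-AutomationPSCredential`) rather than being passed around as plain strings.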

The second step is to update the ADLS data source of our Azure Analysis Services model. To get started, the easiest thing to do is to simply open the AAS database in SQL Server Management Studio and let it script the existing datasource for you:

The resulting JSON will look similar to this one:

```json
{
  "createOrReplace": {
    "object": {
      "database": "Channel Analytics",
      "dataSource": "DS_ADLS"
    },
    "dataSource": {
      "type": "structured",
      "name": "DS_ADLS",
      "connectionDetails": {
        "protocol": "data-lake-store",
        "address": {
          "url": "https://mydatalake.azuredatalakestore.net"
        }
      },
      "credential": {
        "AuthenticationKind": "OAuth2",
        "token_type": "********",
        "scope": "********",
        "expires_in": "********",
        "ext_expires_in": "********",
        "expires_on": "********",
        "not_before": "********",
        "resource": "********",
        "id_token": "********",
        "kind": "DataLake",
        "path": "https://mydatalake.azuredatalakestore.net/",
        "RefreshToken": "********",
        "AccessToken": "********"
      }
    }
  }
}
```

The important part for us is the “credential” field. It contains all the information necessary to authenticate against our ADLS store. However, most of this information is sensitive so only asterisks are displayed in the script. The rest of the JSON (except for the “credential” field) is currently hardcoded in the PowerShell cmdlet so if you want to use it, you need to change this manually!

The PowerShell cmdlet then combines the hardcoded part with an updated “credential”-field which is obtained by invoking a REST request to retrieve a new OAuth token. The returned object is modified a bit in order to match the required JSON for the datasource.
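To give an idea of what "modified a bit" could mean, here is a minimal sketch that turns a token response into a “credential” object shaped like the scripted JSON. The function name is hypothetical, and the mapping of `access_token`/`refresh_token` to `AccessToken`/`RefreshToken` is my assumption based on comparing the field names; the downloadable script may do this differently.

```powershell
# Sketch only: ConvertTo-AdlsCredential is a hypothetical helper, and the
# field mapping is an assumption derived from the scripted datasource JSON.
function ConvertTo-AdlsCredential {
    param(
        [Parameter(Mandatory)] $Token,              # object returned by the token request
        [Parameter(Mandatory)] [string] $AdlsPath   # e.g. https://mydatalake.azuredatalakestore.net/
    )
    # Fixed fields that do not come from the token response
    $credential = [ordered]@{
        AuthenticationKind = 'OAuth2'
        kind               = 'DataLake'
        path               = $AdlsPath
    }
    # Fields that are copied as-is, if the token response contains them
    $passThrough = 'token_type', 'scope', 'expires_in', 'ext_expires_in',
                   'expires_on', 'not_before', 'resource', 'id_token'
    foreach ($name in $passThrough) {
        if ($Token.PSObject.Properties[$name]) {
            $credential[$name] = $Token.$name
        }
    }
    # Fields that are renamed to match the datasource JSON
    $credential['RefreshToken'] = $Token.refresh_token
    $credential['AccessToken']  = $Token.access_token
    return $credential
}
```

The helper expects the kind of object that `Invoke-RestMethod` returns for a JSON response (a `PSCustomObject`), not a hashtable.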

Once we have our final JSON created, we can send it to our Azure Analysis Services instance by calling the Invoke-ASCmd cmdlet from the SqlServer module.
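Put together, building the final JSON and sending it could look roughly like this. Again this is only a sketch: both function names are hypothetical, and the values that are hardcoded in the actual script are parameters here. `Invoke-ASCmd` with `-Server`, `-Credential` and `-Query` comes from the SqlServer module.

```powershell
# Sketch only: New-DataSourceTmsl and Send-Tmsl are hypothetical helpers.
function New-DataSourceTmsl {
    param(
        [Parameter(Mandatory)] [string] $Database,
        [Parameter(Mandatory)] [string] $DataSourceName,
        [Parameter(Mandatory)] [string] $AdlsUrl,
        [Parameter(Mandatory)] [System.Collections.IDictionary] $Credential
    )
    $tmsl = [ordered]@{
        createOrReplace = [ordered]@{
            object     = [ordered]@{ database = $Database; dataSource = $DataSourceName }
            dataSource = [ordered]@{
                type              = 'structured'
                name              = $DataSourceName
                connectionDetails = [ordered]@{
                    protocol = 'data-lake-store'
                    address  = [ordered]@{ url = $AdlsUrl }
                }
                credential        = $Credential
            }
        }
    }
    # The default depth of ConvertTo-Json (2) would truncate the nested objects
    return $tmsl | ConvertTo-Json -Depth 10
}

function Send-Tmsl {
    param($Server, $Credential, $Tmsl)
    # Requires the SqlServer module and network access to the AAS server
    Invoke-ASCmd -Server $Server -Credential $Credential -Query $Tmsl
}
```

The explicit `-Depth` is the detail that is easy to miss: without it, the nested “connectionDetails” part ends up as a flattened string instead of a JSON object.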

Again, please see the original blog post mentioned above for the details of this approach.

After we have updated our datasource, we can simply call our regular processing commands which will then be executed using the newly updated credentials.

The script I wrote allows you to specify which objects to process in different ways:

- whole database (by leaving AASTableName and AASPartitionName empty)

- one or more tables and all their partitions (by leaving only AASPartitionName empty)

- or multiple partitions of a single table (by specifying exactly one AASTableName and multiple AASPartitionNames)

If multiple tables or partitions are specified, the elements are separated by commas (“,”).
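The following sketch shows one way these three cases could be mapped to a TMSL refresh command. It is not the code from the download: the function name and the `AASDatabaseName` parameter are made up for this example, and the refresh type `full` is an assumption.

```powershell
# Sketch only: New-RefreshTmsl and -AASDatabaseName are hypothetical names,
# and the refresh type "full" is an assumption.
function New-RefreshTmsl {
    param(
        [Parameter(Mandatory)] [string] $AASDatabaseName,
        [string] $AASTableName = '',
        [string] $AASPartitionName = ''
    )
    # Split the comma-separated values, trim whitespace, drop empty entries
    $tables     = @($AASTableName.Split(',')     | ForEach-Object { $_.Trim() } | Where-Object { $_ })
    $partitions = @($AASPartitionName.Split(',') | ForEach-Object { $_.Trim() } | Where-Object { $_ })

    if ($partitions.Count -gt 0 -and $tables.Count -ne 1) {
        throw 'Partitions can only be specified together with exactly one table.'
    }

    if ($tables.Count -eq 0) {
        # Case 1: whole database
        $objects = @([ordered]@{ database = $AASDatabaseName })
    } elseif ($partitions.Count -eq 0) {
        # Case 2: one or more tables with all their partitions
        $objects = @($tables | ForEach-Object {
            [ordered]@{ database = $AASDatabaseName; table = $_ }
        })
    } else {
        # Case 3: multiple partitions of exactly one table
        $objects = @($partitions | ForEach-Object {
            [ordered]@{ database = $AASDatabaseName; table = $tables[0]; partition = $_ }
        })
    }

    $tmsl = [ordered]@{ refresh = [ordered]@{ type = 'full'; objects = $objects } }
    return $tmsl | ConvertTo-Json -Depth 5
}
```

For example, `New-RefreshTmsl -AASDatabaseName 'Channel Analytics' -AASTableName 'Sales' -AASPartitionName 'P1,P2'` would produce a refresh command with one object per partition, which can then be sent with `Invoke-ASCmd` just like the datasource update.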

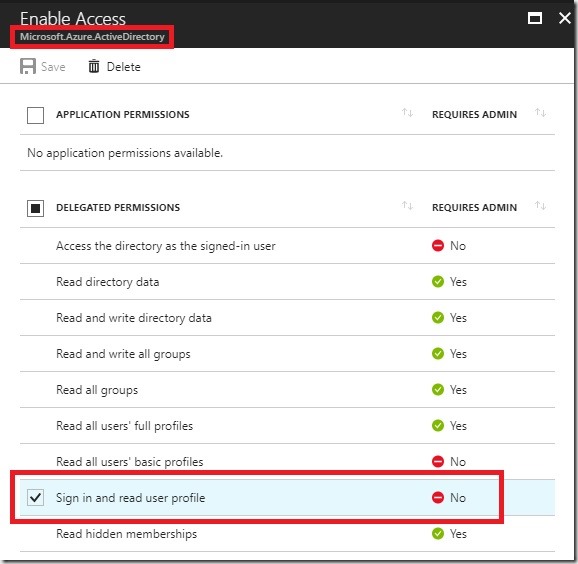

So to make the Runbook work in your environment, follow all the initial steps as described in the original blog post from Microsoft. In addition, you also need to create an Application (Type = “Native”) in your Azure Active Directory to obtain the OAuth token programmatically. This application needs the “Sign in and read user profile” permission from the API “Windows Azure Active Directory (Microsoft.Azure.ActiveDirectory)”:

Also remember the ApplicationID; it will be used as a parameter for the final PowerShell Runbook (parameter “ClientID”)!

When it comes to writing the PowerShell code, simply use the code from the download at the end of this blog post.

For the actual credential that you are using, make sure that it has the following permissions:

- permission to update the AAS datasource (can be set in the AAS model or for the whole server)

- access to the required ADLS files/folders which are processed (can be set e.g. via ADLS Data Explorer)

- (if you previously used your own account to do all the AAS and ADLS development, this should work just fine)

In general, a similar approach should work for all kinds of datasources that require OAuth authentication but so far I have only tested it with Azure Data Lake Store!

Download: AAS_Process_OAuth_Runbook.ps1